LATEST POSTS

RoboTech Vision has been granted a national defense project funded by MDSR

February 27, 2023 | News

After RoboTech Vision was selected by the Ministry of Defense of the Slovak Republic to present its solutions at the V4 security discussions last...

RoboTech Vision had the best results at the negotiations of the V4 countries’ armed forces

June 23, 2022 | Presentation

The Chiefs of Staff of the V4 countries participated in security negotiations and attended the Innovation Challenge Day in Hungary....

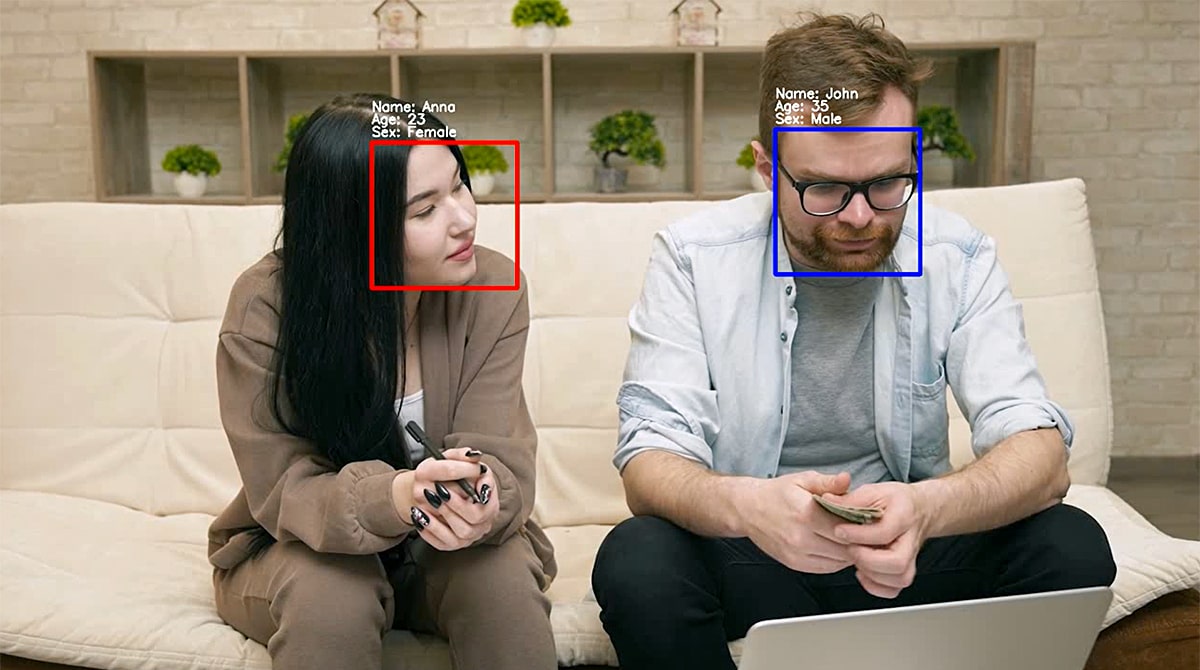

Examples of artificial intelligence use: The robot recognizes faces and distributes drinks

March 23, 2022 | Development

Artificial intelligence has a wide range of uses. It can help people sort data, assist with image processing, and automate various other processes....